Posts

category: Nuke

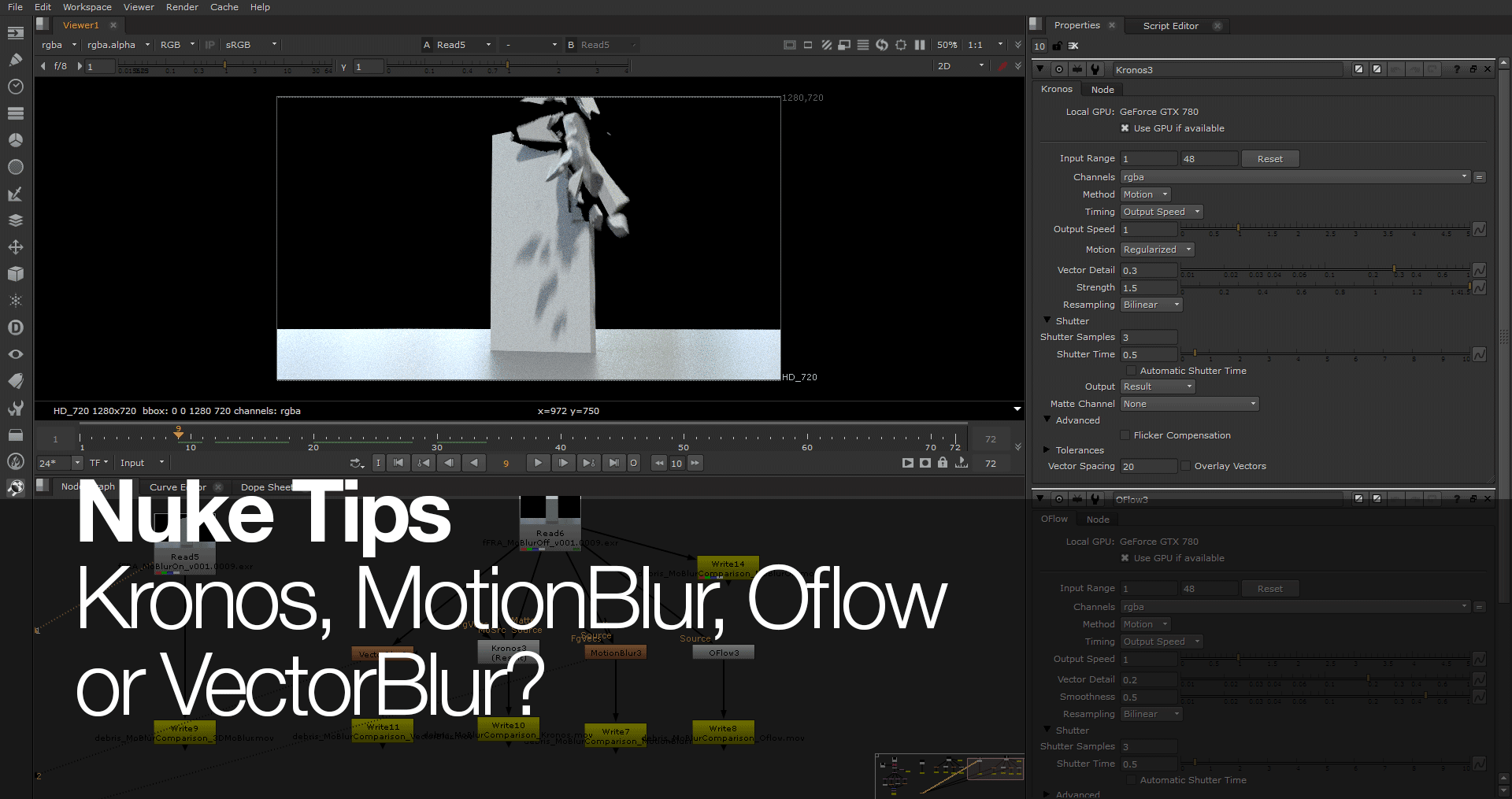

Nuke Tips – Kronos, MotionBlur, Oflow, or VectorBlur?

Which motion blur node should you use for FX elements: Kronos, MotionBlur, Oflow, or VectorBlur? Read on to find out the difference between 3D motion blur and adding post motion blur in Nuke.

Nuke 1001 – Practical Compositing Fundamentals (3DCG)

A guide to practical compositing fundamentals in Nuke for 3DCG projects.

Recreate After Effects Settings in Nuke (and vice versa)

Recreate After Effects Settings in Nuke? Why? Tell me why?

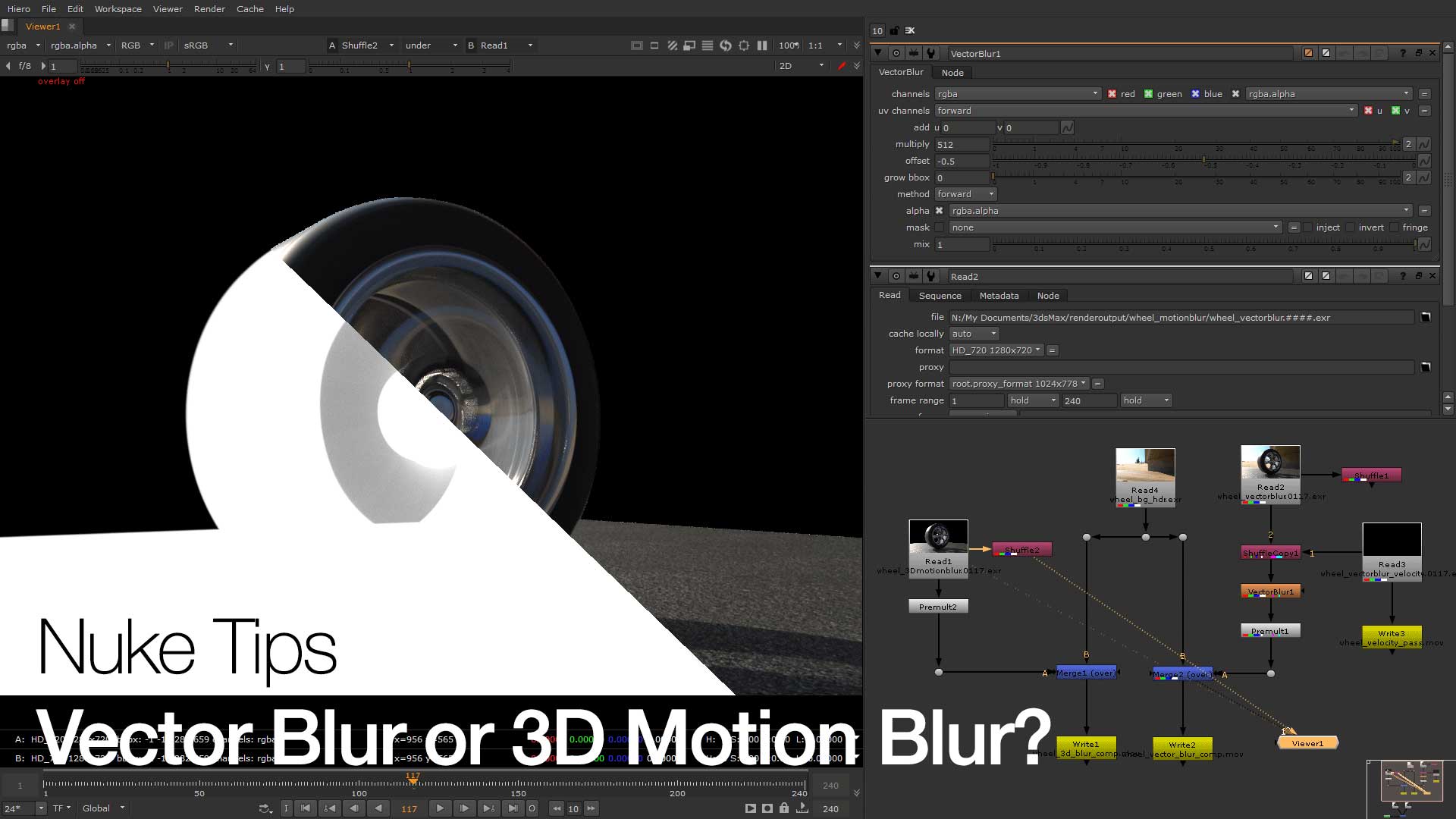

Nuke Tips (of the month) – Vector Blur or 3D motion blur?

Everything is so blurry between Vector Blur and 3D Motion Blur in this Nuke Tips post.

Quick Intro to 2D Tracking in After Effects and Nuke

A quick intro to 2D tracking in After Effects and Nuke for beginners to both compositing and matte painting work.

Tackling Common Issues in CameraTracker

Ever wondered how to tackle common issues in CameraTracker? This post explores the various issues that come up when tracking in CameraTracker.

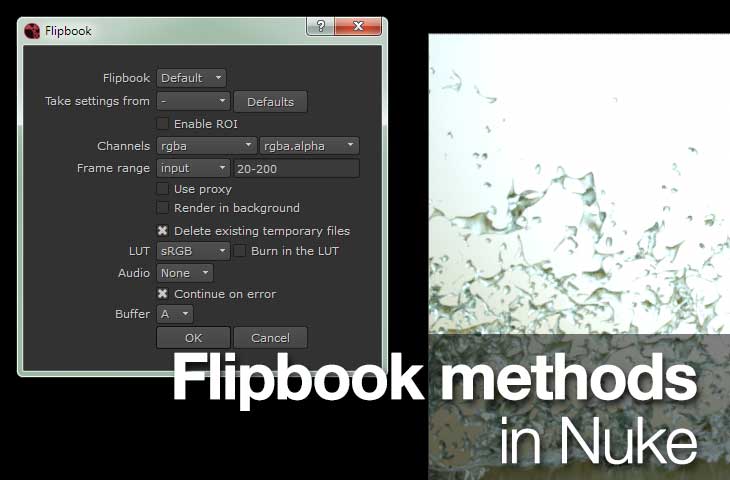

Nuke Tips – Flipbook Methods in Nuke

This post explores the many flipbook methods in Nuke.

Nuke Tips – Noise in Nuke

A quick rundown of the Noise node in Nuke and its usage in typical compositing jobs.

Incriminate Behind the Scenes – Part 1

Part one of the Incriminate behind-the-scenes series, covering the initial pre-production and software. Also includes an outtakes video.