Posts

category: Nuke

H.264 Compression Comparison

Exporting your beauty render from Nuke to H.264 with minimal loss is tricky. The article includes a comparison GIF and guidelines.

A guide to Nuke Non-Commercial

Want to learn Nuke and wondering what the catch is with Nuke Non-Commercial? This guide covers its limitations and an alternative solution.

Nuke Tips – Render Order

A case study of using Render Order in Nuke and an explanation of its use in a pipeline.

Nuke Tips – Masking in Nuke

This post explains what you can and cannot do with masking in Nuke. Includes samples and project files for your exploration.

Nuke Tips – Flare Node

Exploring the Flare node in Nuke and its practical uses in daily compositing.

Day to Night Matte Painting Thought Process – Video Podcast (Episode 0)

A summary of my thought process in creating a day-to-night matte painting, in video podcast format.

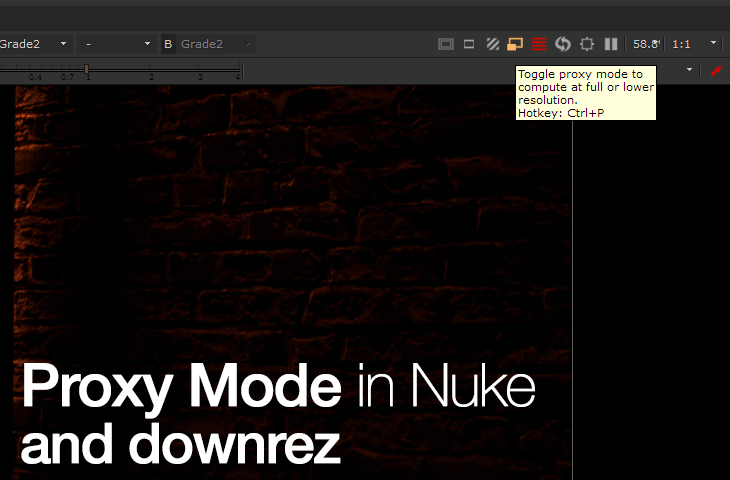

Nuke Tips – Proxy Mode in Nuke

Know when to use Proxy mode in Nuke to speed up your flipbook/preview process.

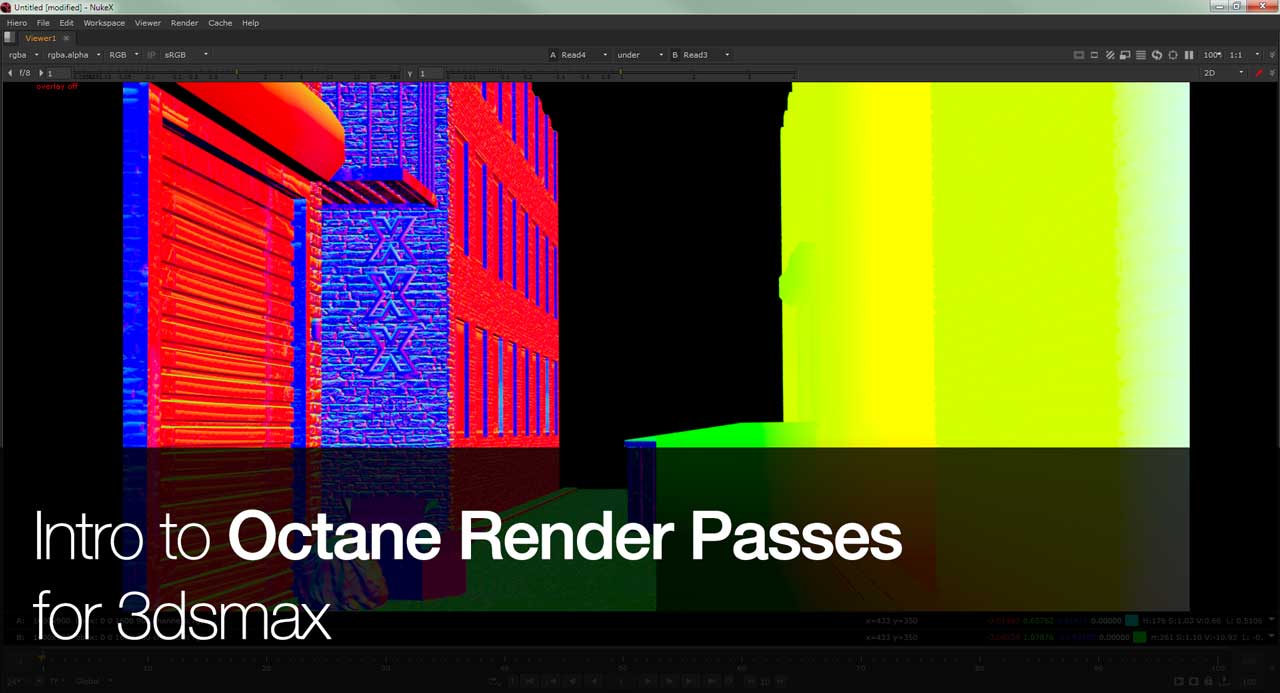

Intro to Octane Render Passes for 3dsmax

Intro to Octane Render Passes for 3dsmax. This covers the essential passes that are useful for compositing in applications like Nuke.

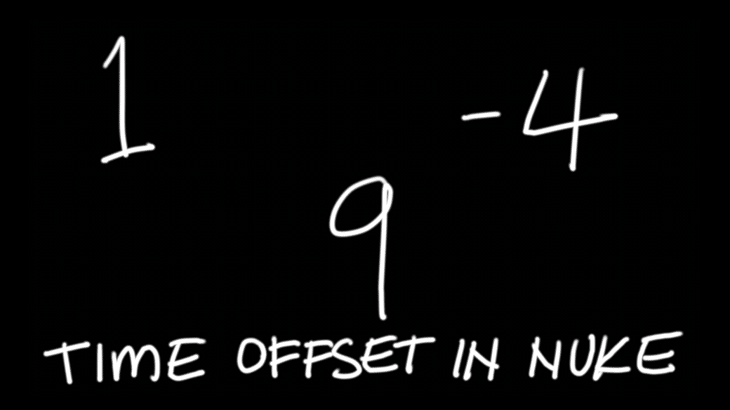

Nuke Tips – Time Offset

Back to basics with this simple explanation of how Time Offset works in Nuke.