Multiplying one to hundreds of files

In my early days of compositing, I usually worked directly from the video container instead of converting the footage into an image sequence.

Bad idea.

Yeah really bad idea.

Why work with image sequences?

For starters, an image sequence lets you know exactly how many frames you need when working on a VFX shot. So instead of working with a clip that was shot at 23.976fps and runs for roughly 3 secs, a whole number such as 71 frames is easier for everyone to digest. When working in a group, you can easily point your team members to the exact frame number if any changes are needed.
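
To make the arithmetic concrete, here is a minimal sketch of how a duration turns into a whole frame count. It assumes that "23.976fps" means the exact rate 24000/1001; the names `NTSC_FILM` and `whole_frames` are made up for this example.

```python
from fractions import Fraction
import math

# Assumption: "23.976fps" is shorthand for the exact rate 24000/1001.
NTSC_FILM = Fraction(24000, 1001)

def whole_frames(duration_seconds, fps=NTSC_FILM):
    """Return how many complete frames fit into the given duration."""
    return math.floor(Fraction(duration_seconds) * fps)

def seconds_at(frame_count, fps=NTSC_FILM):
    """Return the running time of a frame count, in seconds."""
    return float(Fraction(frame_count) / fps)

print(whole_frames(3))                 # 71
print(whole_frames(3, Fraction(24)))   # 72
```

Telling someone "71 frames" is a lot less ambiguous than "about 3 secs at 23.976".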

Image sequences are also faster to scrub than a video container, which is critical when doing any compositing job. While some will argue that a handful of video container files is easier to manage than rummaging through thousands of images, this is about the VFX pipeline and not the editing process. Even then, editorial will work with footage that has been converted from the rendered VFX shots using an intermediate codec such as Apple ProRes.

In the end, no one renders a 3D scene straight to a video container, right? What happens if the render fails after 40 out of 100 frames? If you render to an image sequence, you only need to render again from frame 41 to 100. If the output is a video container… well, you need to render again from frame 1.
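
As a rough sketch of that resume step, the snippet below works out which frames are still missing from a list of rendered file names. The `name.0001.ext` naming pattern and the helper names are assumptions for illustration, not something any particular renderer enforces.

```python
import re

# Assumption: files are named like "render.0041.png" (name.frame.extension).
FRAME_RE = re.compile(r"\.(\d+)\.[A-Za-z0-9]+$")

def rendered_frames(filenames):
    """Collect the frame numbers found in a list of file names."""
    frames = set()
    for name in filenames:
        match = FRAME_RE.search(name)
        if match:
            frames.add(int(match.group(1)))
    return frames

def frames_to_render(filenames, first, last):
    """Return the frames in [first, last] that have no file yet."""
    done = rendered_frames(filenames)
    return [f for f in range(first, last + 1) if f not in done]

# Simulate a render that died after frame 40 of 100.
existing = [f"render.{n:04d}.png" for n in range(1, 41)]
todo = frames_to_render(existing, 1, 100)
print(todo[0], todo[-1], len(todo))  # 41 100 60
```

In practice you would pass in the real directory listing, and it is probably worth checking that the last file written before the crash is not a half-finished frame.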

There is a thing called file format

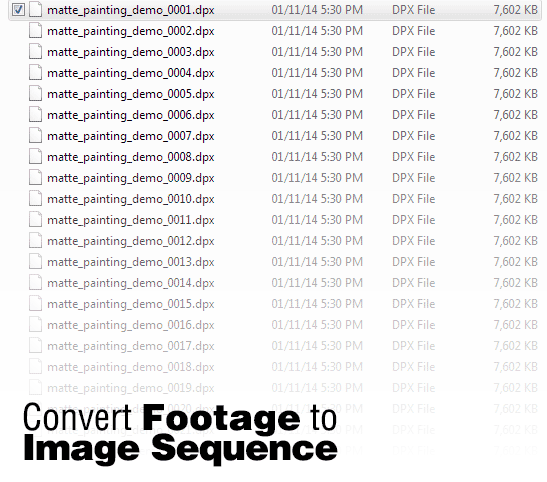

Before we start converting footage to an image sequence, we need to understand which file formats are suitable to work with.

Generally, DPX is a pretty common format for high-end VFX once a shot has been conformed by editorial, since it can hold a higher bit depth and is very fast to read.

Since I mainly work with H.264 footage taken from a DSLR, I usually use PNG for really short sequences (less than 100 frames), since it compresses losslessly to a smaller file size, though with slower read speed. For long sequences, I use DPX: if you export it in linear colour space from Nuke, you will not get any funky colours on your display due to an incorrect working colour space. Plus, I can configure it as linear in After Effects too, so the colours display the way I see them in the original H.264 footage. So yeah, the reason is that PNG will bottleneck your rendering speed on long sequences, unlike DPX.
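
The rule of thumb above can be sketched as a small helper. Note that this example uses ffmpeg for the conversion, which is an assumption of the sketch and not the Nuke or After Effects workflow described here. It only builds the command as a list without running it, leaves colour management out entirely, and the function names and file paths are hypothetical.

```python
def pick_format(frame_count, short_limit=100):
    """PNG for short sequences, DPX once read speed starts to matter."""
    return "png" if frame_count < short_limit else "dpx"

def ffmpeg_command(source, out_dir, shot_name, frame_count):
    """Build (but do not run) an ffmpeg call that writes numbered frames."""
    ext = pick_format(frame_count)
    # %04d gives zero-padded frame numbers such as 0001, 0002, ...
    pattern = f"{out_dir}/{shot_name}.%04d.{ext}"
    return ["ffmpeg", "-i", source, "-start_number", "1", pattern]

# Hypothetical clip and output folder, just to show the result.
print(" ".join(ffmpeg_command("clip.mov", "frames", "shot010", 71)))
```

To actually run the conversion, the returned list could be handed to `subprocess.run`, provided ffmpeg is installed.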

Also, don’t work with a lossy file format such as JPEG. Understand?

I also cover more about file format performance for image sequence reads in these two articles:

Image Sequence Read Speed in Nuke: Does File Format and Size Matters?

Comparing bit depth and format for Colour Grading

Below is a great video by MotionRevolver explaining the use of image sequences in After Effects: